Enhancing Intelligent Tutoring Systems with Instruction-Tuned LLMs: Automated Assessment of Student Code Comprehension

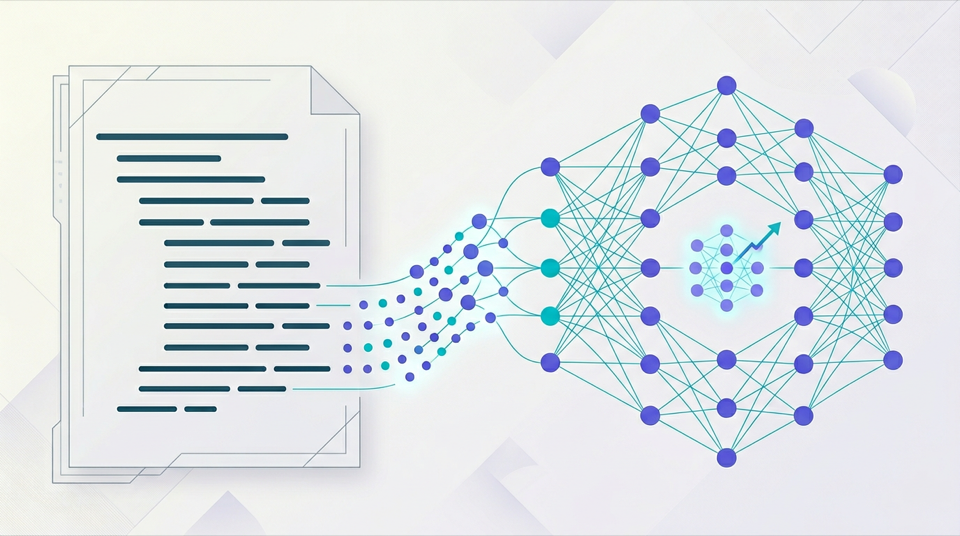

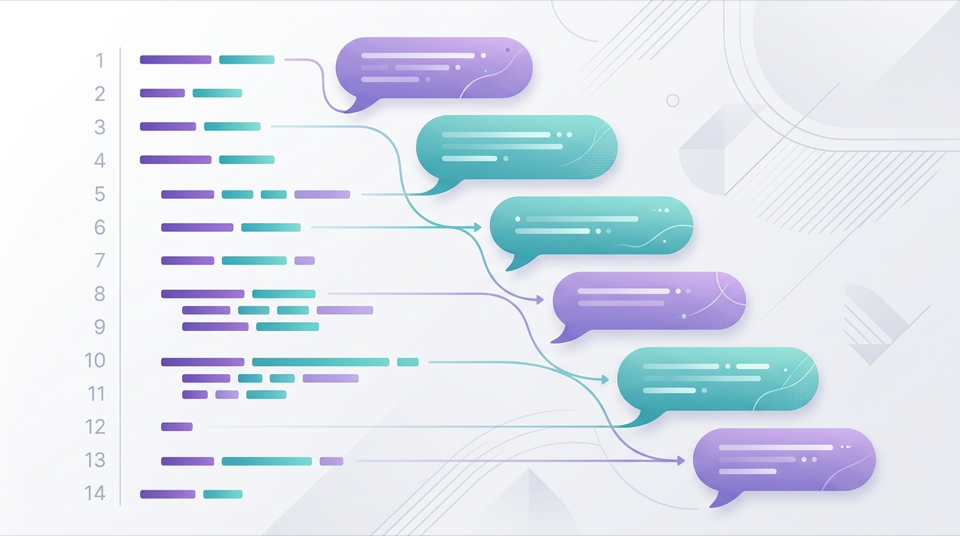

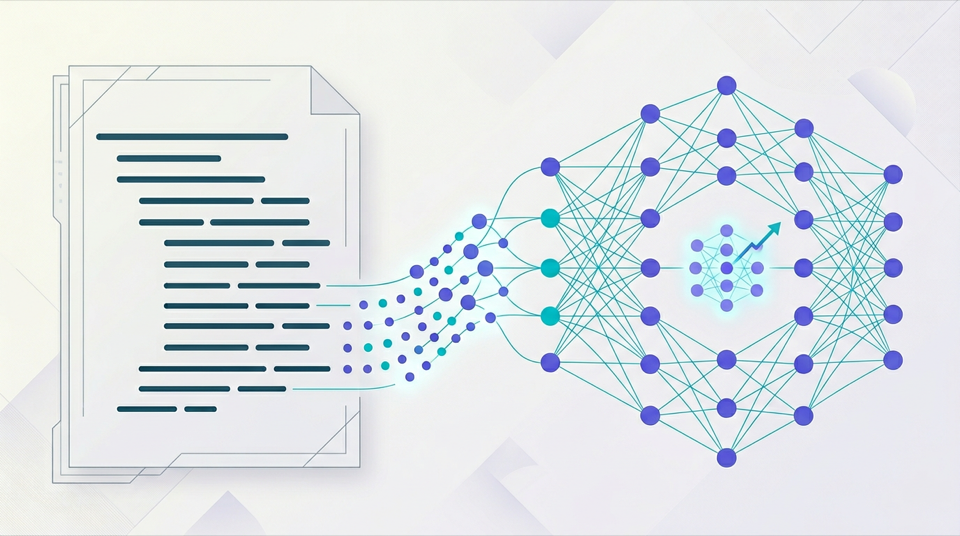

Evaluating students’ natural-language explanations of code quickly enough to give real-time tutoring feedback is challenging. This paper fine-tunes open-source LLMs to automatically assess students’ line-by-line explanations of Java code. The fine-tuned models correlate strongly with human judgments and outperform few-shot prompting baselines.
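The summary reports strong correlations with human judgments but does not say which coefficient is used. The sketch below assumes Pearson's r computed over paired scores: one human rating and one model rating per student explanation. The function name `pearson_r` and the toy scores are hypothetical illustrations, not the paper's code or data.

```python
from math import sqrt


def pearson_r(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation between two equal-length lists of scores.

    Assumption: agreement is measured with Pearson's r; the source does
    not name the coefficient.
    """
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length lists with at least 2 items")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of products of deviations (covariance numerator).
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    # Sums of squared deviations for each list (variance numerators).
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant scores")
    return cov / sqrt(var_x * var_y)


if __name__ == "__main__":
    # Hypothetical toy scores (NOT from the paper): a human rating and a
    # model rating for each of five student explanations of Java lines.
    human_scores = [1.0, 2.0, 3.0, 4.0, 5.0]
    model_scores = [2.0, 1.0, 4.0, 3.0, 5.0]
    print(pearson_r(human_scores, model_scores))  # prints 0.8
```

A value near 1 means the model ranks and spaces explanations much as human raters do. A rank-based coefficient such as Spearman's rho would be a reasonable alternative when scores are ordinal.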